Welcome to today’s Hill & Knowlton.

The original strategic communications consultancy, we were born a public relations pacesetter.

Nearly a century later, Hill & Knowlton is built for purpose...

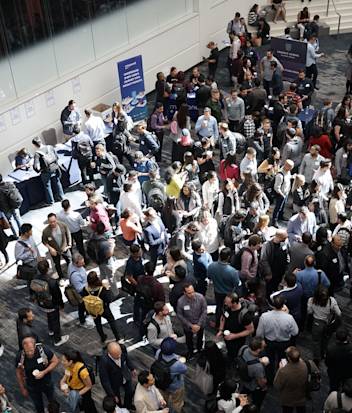

Bringing together new combinations of people and intelligence.

Addressing today’s grand challenges.

Expanding our clients’ possibilities and success.

We are the global strategic communications leader for transformation, helping clients communicate to lead.

Your partner for today’s communications era

Expertise redefined to meet the challenge of change

To lead in today’s dynamic world, brands and businesses need integrated, diverse perspectives that draw on a range of stakeholder experience, sector expertise, creative strategy and executional agility. Hill & Knowlton’s suite of integrated solutions is different by design.